TLDR: not (yet).

I generally shy away from overhyped, trending topics: there is too much noise to find anything deeper than clickbait titles and competing headlines. Still, I have to admit that after trying out GPT-3.5 and then the recently released GPT-4 myself, my curiosity was piqued. It also made me think about the long-term implications of such AI technologies making their way into our “normal” business life and software tools.

Web 3.0 had its big promise of decentralization, with the romantic overtone of irreversibly taking power away from banks and big tech companies and trusting blockchains or tokenization to build a more democratic internet, but this seems to be fading. Silvergate Bank (for crypto) and Silicon Valley Bank (for tech startups) were two of the main channels of investor money behind Web 3.0, but both collapsed recently. To me this inevitably feels like a significant bookmark: one chapter is closing and the door is opening for a new one.

So, where is the money flowing now?

It took a decade for tech to recover from the dot-com bust. Similarly, cheap borrowing has now disappeared, and investors are looking elsewhere in tech for the next big hit.

OpenAI, the company behind GPT, really did start out as “open”, with the goal of developing AI for everyone (and “free of any economic pressures”). However, this has changed along the way, and it is interesting to look into the economics of why. Back in 2015, it was kickstarted with USD 1B in donations pledged by famous supporters like Elon Musk. The company has its headquarters in San Francisco and a staff of around 375, mostly machine learning experts. My guess is that salaries alone cost around USD 200M a year. On top of salaries, let’s think about their enormous computation costs. The creation of GPT-3 was a marvelous feat of engineering: its training was done on 1024 GPUs, took 34 days, and cost an estimated USD 4.6M in computing alone. It is not hard to extrapolate from there that their cloud bill could be a very healthy nine-figure number as well.

OpenAI had received a total of USD 4B in investment over the years, but with a burn rate of around USD 0.5B a year and eight years of continuous operation, it doesn’t take much to figure out that they were starting to run low on cash. Luckily, Microsoft came into the picture and on January 23, 2023 announced the extension of their partnership with a USD 10B investment on top of the USD 3B they had already put into the company.
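As a quick sanity check, the figures quoted above can be run through some back-of-envelope arithmetic. This is only a sketch: every input is an estimate taken from the text, the function names are made up for illustration, and the burn rate is assumed to be a yearly figure.

```python
# Back-of-envelope checks on the estimates quoted in the text.
# None of these inputs are audited numbers.

EMPLOYEES = 375
SALARY_BUDGET_USD = 200e6        # the guess for yearly salary costs

GPUS = 1024
TRAINING_DAYS = 34
TRAINING_COST_USD = 4.6e6        # estimated compute cost of training GPT-3

TOTAL_INVESTMENT_USD = 4e9
BURN_RATE_USD_PER_YEAR = 0.5e9   # assumption: the burn rate is per year


def cost_per_employee(budget: float, headcount: int) -> float:
    """Average yearly cost per employee implied by the salary guess."""
    return budget / headcount


def cost_per_gpu_hour(total_cost: float, gpus: int, days: int) -> float:
    """Implied price of one GPU-hour if every GPU ran around the clock."""
    return total_cost / (gpus * days * 24)


def runway_years(cash: float, burn_per_year: float) -> float:
    """How many years the cash lasts at a constant burn rate."""
    return cash / burn_per_year


print(f"{cost_per_employee(SALARY_BUDGET_USD, EMPLOYEES):,.0f} USD per employee per year")
print(f"{cost_per_gpu_hour(TRAINING_COST_USD, GPUS, TRAINING_DAYS):.2f} USD per GPU-hour")
print(f"{runway_years(TOTAL_INVESTMENT_USD, BURN_RATE_USD_PER_YEAR):.0f} years of runway")
```

Under these assumptions the USD 4B lasts exactly eight years, which matches the "running low on cash" reading above.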

Ownership is split across three groups currently:

- Microsoft currently holds 49% of OpenAI

- VCs hold another 49%; new VCs are buying up shares from employees who want to take some chips off the table

- The OpenAI foundation controls the remaining 2% of the shares

An interesting part of the deal is that if OpenAI does not manage to create a success, or if we enter a new AI winter, Microsoft picks up the tab. If, however, OpenAI creates something truly meaningful and manages to repay Microsoft on the agreed terms, the foundation will regain 100% control of what they built.

This deal is absolutely genius as it solves all of OpenAI’s problems at once. They have money to research and build new versions, they have access to all the computational power they need through Microsoft Azure, and they get free distribution through Microsoft’s sales teams, with their models integrated into MS Office products.

Wait, can GPT-4 actually code my application for me?

GitHub (a Microsoft-owned company since 2018) is easily the most popular code hosting platform for version control and collaboration, used by more than 100 million software developers worldwide. It has a truckload of essential tools, from access control, bug tracking, and feature request tracking to task management and continuous integration (CI/CD), that all make a dev’s life easier.

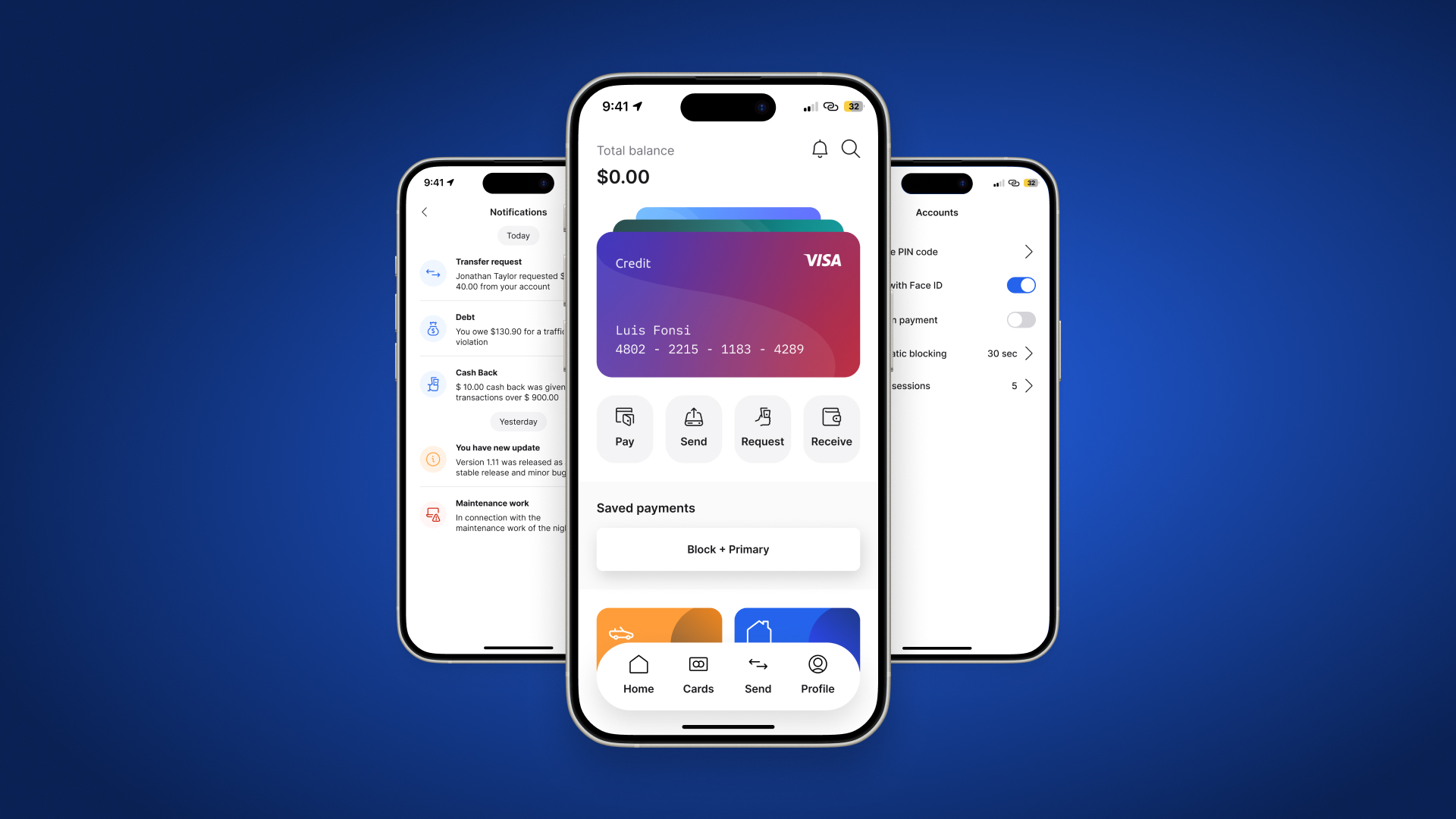

One of the most significant additions to GitHub since its acquisition is Copilot, which uses OpenAI Codex to suggest code and complete functions in real time, right in the editor.

Meet GitHub Copilot – your AI pair programmer. https://t.co/eWPueAXTFt pic.twitter.com/NPua5K2vFS

— GitHub (@github) June 29, 2021

It is a sort of super-intelligent autocomplete that claims to have really broad knowledge of how people write software and promises to be “significantly more capable than GPT-3” at generating code by drawing context from the code the developer is working on.

After some initial testing and reading through some more comprehensive reviews (like this one), I tend to agree with the general consensus: Copilot seems to be a really useful tool, but it feels aimed at more experienced developers who already know what they are looking for and what kind of software architecture they want to build, and who simply want to speed up their work and perhaps eliminate some of the manual searching and the more repetitive parts of coding.

On February 17th this year, GitHub announced the next evolutionary step: Copilot for Business, a new USD 19/month enterprise version, became publicly available after a short beta phase that started last December. Talking to TechCrunch, GitHub CEO Thomas Dohmke highlighted a few important things:

- Copilot now runs on an improved OpenAI model.

- As the model keeps improving, features like “fill-in-the-middle” are becoming available: the model not only completes a single line but also adds code in the middle, because it knows what sits before and after the current cursor position.

- Copilot also looks at related and adjacent files you are working on and uses that information to craft the prompt that is sent to the model for inference.

- Copilot now also recognizes common security vulnerabilities in the code that comes back from the model. If it finds a vulnerability, it automatically jumps to a suggestion that is more secure.

- There is also a significant speed bump that reduces latency and produces more instant hints.
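To make “fill-in-the-middle” and the use of adjacent files a bit more concrete, here is a minimal sketch of how such a prompt might be assembled. It is not how Copilot actually does it: the sentinel tokens, the `EditorState` class, and `build_fim_prompt` are all hypothetical names invented for this illustration, and real models define their own special tokens and context-selection rules.

```python
from dataclasses import dataclass

# Hypothetical sentinel tokens; real models define their own special tokens.
PREFIX_TOKEN = "<PREFIX>"
SUFFIX_TOKEN = "<SUFFIX>"
MIDDLE_TOKEN = "<MIDDLE>"


@dataclass
class EditorState:
    text: str    # full content of the file being edited
    cursor: int  # character offset of the cursor


def build_fim_prompt(state: EditorState,
                     neighbours: dict[str, str],
                     max_neighbour_chars: int = 200) -> str:
    """Combine snippets from adjacent files with the code before and
    after the cursor, so the model can generate the missing middle."""
    prefix = state.text[:state.cursor]
    suffix = state.text[state.cursor:]

    # Adjacent files are added as commented context, truncated to a budget.
    context_parts = []
    for path, content in neighbours.items():
        context_parts.append(f"# File: {path}\n{content[:max_neighbour_chars]}")
    context = "\n".join(context_parts)

    # The model is expected to continue after MIDDLE_TOKEN with the infill.
    return f"{context}\n{PREFIX_TOKEN}{prefix}{SUFFIX_TOKEN}{suffix}{MIDDLE_TOKEN}"


source = "def area(r):\n    return \n\nprint(area(2))\n"
cursor = source.index("return ") + len("return ")
prompt = build_fim_prompt(EditorState(source, cursor),
                          {"shapes.py": "PI = 3.14159\n"})
print(prompt)
```

The point of the layout is that the text after the cursor (`print(area(2))`) reaches the model before it starts generating, which a plain left-to-right completion prompt would not provide.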

Maybe even more excitingly, Dohmke predicts that in the near future Copilot will be able to generate 80% of an average developer’s code. Compare that with today’s estimated 46% across various programming languages and around 61% for Java specifically, and you can see he has set quite a significant expectation.

hey gpt4, make me an iPhone app that recommends 5 new movies every day + trailers + where to watch.

My ambitions grew as we went along 👇 pic.twitter.com/oPUzT5Bjzi

– Morten Just (@mortenjust) March 15, 2023

However, if you followed the GPT-4 announcement, you saw a demo in the presentation where it turned a very basic napkin sketch of a “My Joke Website” into a functional, working website for revealing jokes. Browsing through Twitter today, you can also see some fun examples of ChatGPT and Bing similarly generating not just code but entire applications from casually written prompts. This is enabled by the same family of models that powers Copilot, so the obvious question is when these capabilities will arrive in Copilot, given that the new GPT-4 is more capable than the Codex system currently powering it.

“We think it’s a really exciting feature that the Bing team launched and we have nothing to announce today – wink, wink, nudge, nudge – but it’s very exciting,” replied Dohmke when asked the same question. He did add that their goal is always to help developers do their work more efficiently, and that current models might produce code that isn’t correct.

What is an LLM anyway?

Generative Pre-trained Transformer, or GPT for short, is a generative LLM (large language model) based on the “transformer” architecture. Such models are capable of processing large amounts of text and learning to perform natural language processing (NLP) tasks. GPT-3, for example, has 175 billion parameters, which made it the largest language model ever trained when it was created. It was trained on roughly 300 billion tokens of text drawn from filtered web crawl data, books, Wikipedia articles, and other text posted on the internet. From the training data, the model learns to perform NLP tasks and generate coherent, well-structured text. For ChatGPT, supervised fine-tuning and reinforcement learning from human feedback (RLHF) were used on top of this. In the supervised step, human AI trainers provided conversations in which they played both sides, the user and the AI assistant.

On the other hand, the new GPT-4 is a large multimodal model, meaning it can accept image input in addition to text. How such systems are benchmarked is an equally exciting topic, so for the more curious among you I definitely recommend checking the official post: https://openai.com/research/gpt-4

For me, a key aspect is the Limitations section of this write-up, which quite plainly states that answers (or output) are still not fully reliable. GPT-4 scores 40% higher than its predecessor GPT-3.5 on OpenAI’s internal factuality evaluations, but it still “hallucinates” facts and makes reasoning errors. Quoting the page: “great care should be taken when using language model outputs, particularly in high-stakes contexts, with the exact protocol (such as human review, grounding with additional context, or avoiding high-stakes uses altogether) matching the needs of a specific use-case.”

“This Copilot is stupid and it wants to kill me”

The internet anecdote is that during the initial tests of GPT-3, researchers were surprised to find that it could write code based on the general training data alone; the crawled internet files must have contained usable code snippets. This gave them the idea to train a derivative (Codex) specifically for coding tasks.

For training Codex, OpenAI collected public GitHub code repositories, around 159 GB in total. The code base was used as a “text” corpus to train Codex on the language modeling task “predict the next word” (more precisely, the next token). They also created a dataset of carefully selected training problems that mirror the evaluation method, and used it for fine-tuning. To test how well Codex can write Python functions, OpenAI created a data set of 164 programming problems called HumanEval. Each problem consists of a function signature, a docstring, and a collection of unit tests. During the test, Codex is presented with a prompt containing only the function signature and the docstring, and its job is to complete the function. Passing all unit tests counts as success; failing at least one counts as failing the problem.
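The evaluation loop described above can be sketched in a few lines. This is a toy illustration, not OpenAI’s harness: the `count_vowels` problem, the two hard-coded “completions”, and the `passes` helper are all invented here, and a real setup would run generated code in a sandbox instead of calling `exec` directly.

```python
# A made-up problem in the style described above (not taken from HumanEval):
# the prompt contains only the function signature and the docstring.
PROMPT = '''def count_vowels(text: str) -> int:
    """Return the number of vowels (a, e, i, o, u) in text, ignoring case."""
'''
ENTRY_POINT = "count_vowels"


def check(candidate) -> None:
    """Unit tests for the problem; any failed assert fails the problem."""
    assert candidate("") == 0
    assert candidate("Copilot") == 3
    assert candidate("RHYTHM") == 0


def passes(prompt: str, completion: str, entry_point: str, check_fn) -> bool:
    """Return True only if prompt + completion runs and passes every test."""
    namespace: dict = {}
    try:
        # WARNING: never exec untrusted model output outside a sandbox.
        exec(prompt + completion, namespace)
        check_fn(namespace[entry_point])
    except Exception:
        return False
    return True


# Stand-ins for model output; a real run would sample these from the model.
GOOD_COMPLETION = "    return sum(1 for ch in text.lower() if ch in 'aeiou')\n"
BAD_COMPLETION = "    return len(text)\n"

print(passes(PROMPT, GOOD_COMPLETION, ENTRY_POINT, check))  # True
print(passes(PROMPT, BAD_COMPLETION, ENTRY_POINT, check))   # False
```

Note the all-or-nothing scoring: the second completion gets the empty-string case right but still fails the problem, because one failed unit test is enough.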

In the paper introducing Codex, OpenAI points out a few important limitations:

- There is a negative correlation between complexity and success rate. The docstring complexity is quantified by the number of “chained components” it has. For example, a single component can be an instruction like “convert the string to lowercase”.

In other words: Codex is only good at writing simple functions.

- Codex is not trained to generate high-quality code but to reproduce “general” code found on GitHub. This also means that code produced by Codex will include widespread bad coding habits, similar to language models perpetuating stereotypes present in their training data.

- The paper says: “We sample tokens from Codex until we encounter one of the following stop sequences: ‘\nclass’, ‘\ndef’, ‘\n#’, ‘\nif’, or ‘\nprint’, since the model will continue generating additional functions or statements otherwise.”

This simply means that Codex would continue to generate unnecessary code even if the block that addresses the problem stated in the prompt is already finished.

- Codex “can recommend syntactically incorrect or undefined code, and can invoke functions, variables, and attributes that are undefined or outside the scope of the codebase”.

This also comes naturally from the mechanics of how these ML models work: they stitch together different fragments of code they saw, even if they don’t fit together.
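The stop-sequence behaviour quoted from the paper is easy to picture as a post-processing step. The sketch below uses the five stop sequences from the quote; the `truncate_at_stop` helper is a hypothetical name, and cutting the text after the fact is an assumption made for simplicity (the paper describes stopping during sampling).

```python
# The stop sequences listed in the Codex paper quote above.
STOP_SEQUENCES = ["\nclass", "\ndef", "\n#", "\nif", "\nprint"]


def truncate_at_stop(completion: str, stops=STOP_SEQUENCES) -> str:
    """Cut the completion at the earliest stop sequence, if any occurs."""
    cut = len(completion)
    for stop in stops:
        index = completion.find(stop)
        if index != -1:
            cut = min(cut, index)
    return completion[:cut]


# A raw completion that finishes the function and then keeps going.
raw = "    return a + b\n\ndef unrelated():\n    pass\n"
print(repr(truncate_at_stop(raw)))  # '    return a + b\n'
```

Everything from the second function definition onward is discarded, which is exactly the "unnecessary code" the stop sequences are there to prevent.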

There is a huge difference between generating and understanding code. The deep learning model does not understand programming: like all other deep learning–based language models, Codex just captures statistical correlations between pieces of code.
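A toy example shows what “capturing statistical correlations” can look like in its simplest form. The bigram counter below is vastly simpler than Codex (which is a transformer, not a lookup table), and the tiny corpus and function names are invented for this sketch, but the principle of predicting the next token from what tended to follow in the training data is the same.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every token, which tokens followed it in the corpus."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for line in corpus:
        tokens = line.split()
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts: dict[str, Counter], token: str):
    """Return the most frequent follower of token, or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# A tiny, made-up "code corpus", pre-split into whitespace-separated tokens.
corpus = [
    "for i in range ( n ) :",
    "for x in items :",
    "for key in range ( 10 ) :",
    "return x",
]
model = train_bigrams(corpus)
print(predict_next(model, "in"))     # range
print(predict_next(model, "while"))  # None
```

The model suggests `range` after `in` purely because that pairing was most frequent; it has no notion of what a loop is, and it has nothing to say about tokens it never saw.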

The quote that provides the title of this section comes from Matthew Butterick, a programmer, designer, writer, and lawyer in Los Angeles, who teamed up with a group of lawyers to file a lawsuit seeking class-action status against Microsoft and the other companies involved in designing and deploying Copilot. In his view, using millions of developers’ public code this way simply amounts to piracy: the system does not acknowledge its debt to existing work, and so violates the legal rights of the millions of programmers who spent years writing the original code.

Of course, we see similar pushback every time a significant technology emerges, but he also quite harshly criticizes the quality of the code he could generate with the technology (albeit with an earlier version of the current model):

“This is the code I would expect from a talented 12-year-old who learned about JavaScript yesterday and prime numbers today. Does it work? Uh—maybe? Notably, Microsoft doesn’t claim that any of the code Copilot produces is correct. That’s still your problem. Thus, Copilot essentially tasks you with correcting a 12-year-old’s homework, over and over. (I have no idea how this is preferable to just doing the homework yourself.)”

A new era of Low-code/No-code – and my key takeaways

Would I trust any version of GPT to write a production website or app for me? – No.

Do I think we will need to hire fewer developers soon because of these new technologies? – Nope.

Do I feel the gate is open now and new models can be trained easily? – Well, there are certainly some tough limitations still.

Do I think it will become really useful in the near future? – Heck YES!

LLMs are infinity app stores in a box.

With time you will not need to download others apps, you will just build your own highly customized app for your specific purpose simply by describing your problem or task.

The apps and GUI will be created as a byproduct. #AI

– ThomPete (@Hello_World) March 6, 2023

Twitter posts like the one above certainly sound futuristic, but I do believe that AI technologies can bring a significant shift in our mindset and, circling back to the beginning of this article, a new era of the web. One area where I feel the most significant change will come soon is the Low-code/No-code space. Microsoft is already including the acquired services in their Power Apps, and many other vendors are joining the game. I have always felt that current solutions are too cumbersome or too basic for a “real” developer, yet way too complicated for someone who has no programming knowledge and just wants to put together a basic Excel sheet automation or something similarly straightforward.

By watching this recent video from BurnedGuitarist, you can see that he was able to put together an Overdrive VST plugin with the help of GPT-4, which is nothing short of amazing. However, you can also see that there were a few prerequisites: he was really specific about his requirements, knew the technical requirements for the plugin, understood what the code needed, was willing to make multiple attempts and go back and forth (for hours), and was ready to correct the code and add some polishing touches. Even with all that, the plugin doesn’t sound like a TubeScreamer pedal (believe me, I have one on my desk).

Probably the best thing about the huge popularity of GPT-4 and the endless publications about it is that they draw a lot of attention to the field, bring new investors in, and make other big players like Google join, so I definitely expect some big new breakthroughs in the coming years.

Let’s talk about ML and AI and let’s create something fun as a POC project together. If you want to explore GPT-4 together, just shoot me a line.